Power your performance at scale

Products

Advanced computing products, designed for performance, sovereignty and energy efficiency.

We’re glad you’re here. We’re entering an exciting new chapter of innovation, technology leadership, and digital transformation. There’s plenty to explore already, and we’ll keep adding insightful content with new stories, industry focus, and experiences. Stay tuned, this is just the beginning.


Bull delivers next-gen computing and AI while advancing sustainability and regional autonomy.




Solutions that turn computing power into tangible outcomes for your organisation.


End-to-end services to design, deploy and operate your advanced computing and AI environments.


Bull powers the world with exascale-ready high-performance computing, AI, and quantum innovations, driven by the most advanced data and artificial intelligence capabilities.


Bull delivers full stack, end-to-end solutions, seamlessly integrating hardware, software, and services for advanced computing at scale.


Bull brings together data, AI, and engineering expertise to turn collective excellence into meaningful innovation.


Bull leads in responsible sustainability through efficient manufacturing, product design, and operations, built on open standards that create universal access.


Bull guarantees a true alternative with European excellence and technologies to empower regional sovereignty and autonomy.

A national lab accelerated climate modelling simulations by 5× with Bull's next-generation HPC cluster and optimised software stack.

An automotive manufacturer cut prototype cycles from months to days using our AI-enhanced HPC environment.

A government agency deployed a sovereign AI platform built on Bull infrastructure to secure and localise sensitive workloads.

We have established strong, enduring links with leading technology and data partners across the full innovation stack, spanning semiconductors, accelerators, cloud platforms, software, and data ecosystems. From R&D through to sales, marketing, and delivery, these partnerships ensure our customers benefit from the most advanced, optimised, and efficient solutions available.

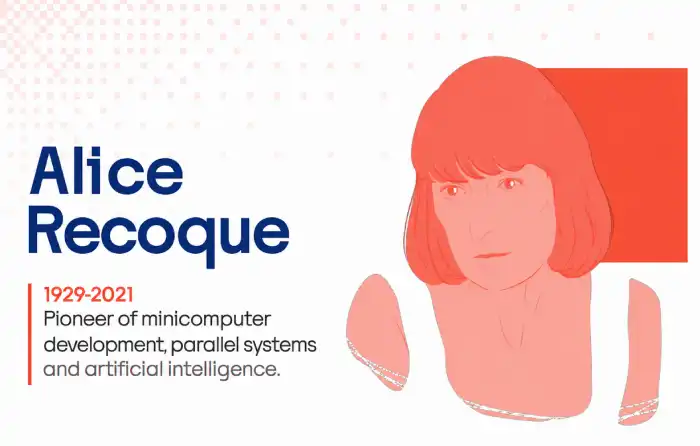

For nearly a century, from Eviden to Bull, we have empowered nations and critical industries to fully control their computational and data assets, turning their biggest challenges into tangible outcomes while ensuring strategic autonomy.